This post is me marvelling at one of the many approaches engineers on the early web took to optimize delivery of web applications on a global scale. I see this as an opportunity to get a deeper understanding of how the web works and explore the intricacies of the DNS protocol. Towards the end of the post, we will see this in action with Azure Traffic Manager.

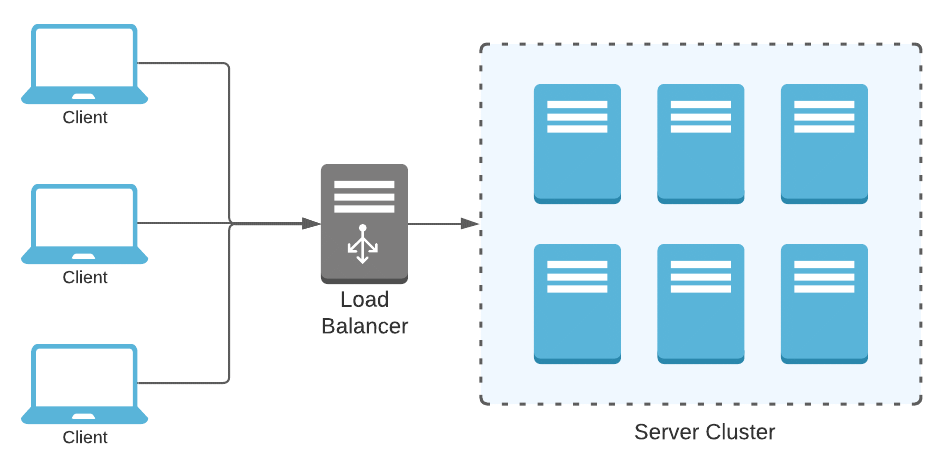

When running web applications at scale, load-balancers act as gateways to a cluster of homogeneous servers, creating a reliable infrastructure. These load-balancers can route traffic using algorithms as simple as round-robin, or get more complex and probe server health metrics to choose the most reliable unit. Conventionally, load-balancers operate on layer 4 traffic, peeking into TCP and UDP packets and routing them to the optimal destination. In Azure, this role is played by Azure Load Balancer. Load-balancing gets a bit more nuanced once you think about scaling globally. This post will walk you through these nuances and help you appreciate the role that the DNS protocol plays here.

The need for global load-balancing

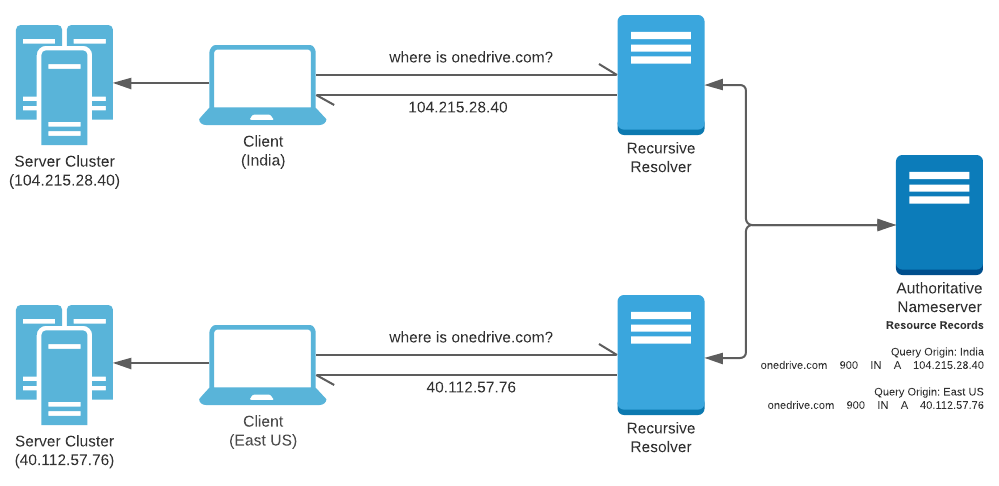

Consider an application like OneDrive that provides personal storage space to users across the globe. Running an application of this nature at a global scale needs more than a single server cluster. An approach that accommodates geographically distributed clusters becomes essential, along with the ability to process user requests at the topologically nearest cluster. This ensures that requests carrying gigabits of data spend less time on the network.

Conventional load-balancers act as a gateway and face all the traffic flowing towards the cluster. Placing clusters in different geographies behind one load-balancer gains little: every request still travels to the load-balancer first, so only the distance behind the load-balancer varies. Such requests do not take an optimal network route. A more appropriate solution lets users discover their topologically nearest cluster and have their requests processed there.

Clients looking to send a request to OneDrive should be able to discover the nearest cluster before the actual request is initiated. This is where DNS plays an interesting role.

DNS Background

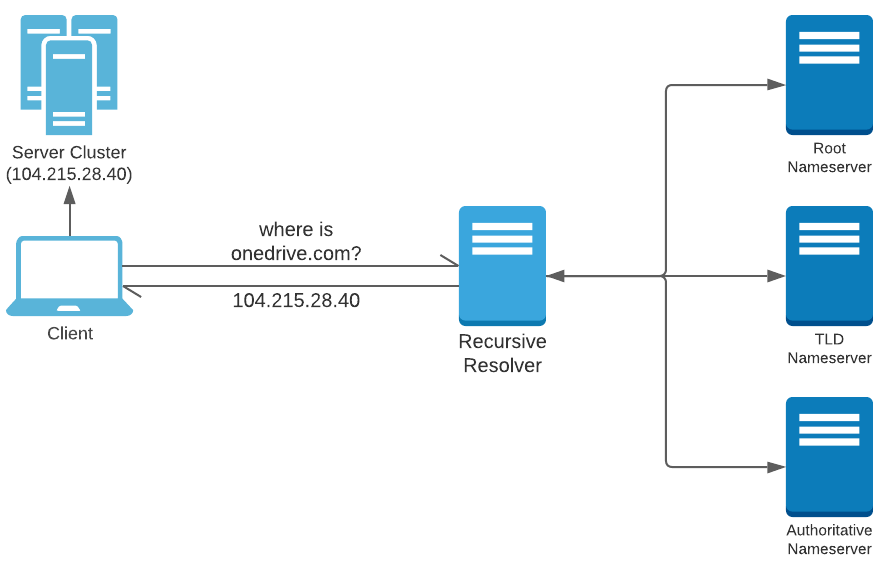

DNS servers help clients such as web browsers and mobile apps translate domain names into the IP addresses to which the actual HTTP request is sent. I will recap the relevant DNS concepts in this post. If you are looking for more, Cloudflare has a nice overview of what DNS is and how it works.

There are many types of DNS servers, of which the Recursive Resolver and the Authoritative Name Server are relevant here. A Recursive Resolver is a DNS server that acts as a concierge for client applications. It does not necessarily hold the mapping from a domain name to an IP address, but it can traverse a series of DNS servers until it reaches the Authoritative Name Server that holds this mapping.

When a DNS resolver looks up the IP address for a domain name, it may come across records redirecting it to other Name Servers (NS records) or to other domain names (CNAME records); for a successful IPv4 lookup, however, the search ends with an IP address (A record) returned by an Authoritative Name Server. The result is then cached for the Time-to-live (TTL) associated with the record.

RFC 1034 and RFC 1035, published in 1987, formalized these key characteristics of the DNS protocol.
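The lookup chain and TTL caching described above can be sketched with a toy resolver. This is a simplification under stated assumptions: a hypothetical in-memory `ZONE` dict stands in for the whole root, TLD and authoritative traversal, only CNAME and A records are modelled, and the names and addresses are made up (documentation ranges).

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    rtype: str  # "CNAME" or "A" (the only types this toy handles)
    value: str  # alias target for CNAME, IPv4 address for A
    ttl: int    # seconds the answer may be cached


# Hypothetical data standing in for the root -> TLD -> authoritative walk.
ZONE = {
    "www.example.test.": Record("CNAME", "app.example.test.", 300),
    "app.example.test.": Record("A", "192.0.2.10", 60),
}


class ToyResolver:
    """Follows CNAME chains to an A record and caches every answer until its TTL expires."""

    def __init__(self, zone, clock=time.monotonic):
        self.zone = zone
        self.clock = clock
        self.cache = {}            # name -> (Record, expiry time)
        self.upstream_queries = 0  # how often we had to "ask" the zone

    def _lookup(self, name):
        cached = self.cache.get(name)
        if cached and cached[1] > self.clock():
            return cached[0]  # still within TTL: answer from cache
        record = self.zone[name]  # a KeyError here plays the role of NXDOMAIN
        self.upstream_queries += 1
        self.cache[name] = (record, self.clock() + record.ttl)
        return record

    def resolve(self, name, max_hops=8):
        for _ in range(max_hops):
            record = self._lookup(name)
            if record.rtype == "A":
                return record.value
            name = record.value  # CNAME: continue with the alias target
        raise RuntimeError("CNAME chain too long")
```

With a fake clock, the first resolution of `www.example.test.` costs two upstream lookups and an immediate repeat costs none; once 60 seconds have passed, only the expired A record is fetched again while the CNAME (TTL 300) is still served from cache.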

Load-balancing with DNS and challenges

DNS, being the mediator that introduces clients to their destination IP address, can be leveraged to balance traffic across a list of IP addresses where the web application is accessible. For example, a domain name can have multiple A records. Resolvers that receive a set of Resource Records (RRs) containing multiple A records for a domain name can prioritize an IP address based on various algorithms. So it isn't wrong to say that DNS inherently supports load-balancing.
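One simple way to spread clients across multiple A records is to rotate the order of the record set on every response, often called round-robin DNS. The sketch below assumes, as a simplification, that every client connects to the first address it receives; real resolvers and clients may reorder or select addresses differently. The addresses are hypothetical documentation addresses.

```python
from collections import Counter
from itertools import cycle

# Hypothetical record set: one domain name with three A records.
A_RECORDS = ["192.0.2.1", "192.0.2.2", "192.0.2.3"]


def round_robin_answers(records):
    """Yield the record set rotated by one position for each new query."""
    for offset in cycle(range(len(records))):
        yield records[offset:] + records[:offset]


def simulate(queries):
    """Count which address each client uses, assuming it picks the first one."""
    answers = round_robin_answers(A_RECORDS)
    return Counter(next(answers)[0] for _ in range(queries))
```

Under that assumption, `simulate(9)` hands each of the three addresses to exactly three clients.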

Dynamically formed Resource Records

Web application administrators have little to no control over the load-balancing behaviour of resolvers. Resolvers are selected by clients and are usually operated by the ISP or by a service the client subscribes to. Furthermore, load-balancing via A records is restrictive at global scale. The ability to configure a regional domain alias (us.onedrive.com, in.onedrive.com, etc.) or a regional name server is desirable, because sub-domains are far more flexible to procure than IP addresses.

Conventionally, Authoritative Name Servers are designed to respond to every query against a domain name with the same Resource Records. A global load-balancing control plane on a DNS server can only be achieved through Authoritative Name Servers generating algorithmic responses. For instance, an Authoritative Name Server can be programmed to respond with Resource Records based on the topological origin of the request. The responses continue to comply with established standards, but vary based on the origin of the request.

This is precisely how services like Azure Traffic Manager work. There are numerous approaches to load-balancing with DNS; here are a few of the many approaches supported by Azure Traffic Manager.

- Geographic: Select Geographic routing to direct users to specific endpoints (Azure, External, or Nested) based on the geographic location their DNS queries originate from. This routing method helps with scenarios such as complying with data sovereignty mandates, localizing content and user experience, and measuring traffic from different regions.

- Multivalue: Select MultiValue for Traffic Manager profiles that can only have IPv4/IPv6 addresses as endpoints. When a query is received for this profile, all healthy endpoints are returned.

- Subnet: Select Subnet traffic-routing method to map sets of end-user IP address ranges to a specific endpoint. When a request is received, the endpoint returned will be the one mapped for that request’s source IP address.
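To make the three routing methods above concrete, here is a toy model of how an Authoritative Name Server could compute its answer for each. It is not Traffic Manager's implementation: the endpoint names, regions and address ranges are hypothetical, the origin region is assumed to come from a GeoIP lookup done elsewhere, subnet ranges are assumed not to overlap, and `None` simply stands in for "no matching endpoint".

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class Endpoint:
    name: str                                    # hypothetical endpoint host name
    address: str                                 # IPv4 address (documentation range)
    healthy: bool = True                         # latest health-probe result
    regions: set = field(default_factory=set)    # Geographic mapping (country codes)
    subnets: list = field(default_factory=list)  # Subnet mapping (CIDR strings)


ENDPOINTS = [
    Endpoint("app-in.example.test", "192.0.2.10", regions={"IN"},
             subnets=["198.51.100.0/24"]),
    Endpoint("app-us.example.test", "192.0.2.20", regions={"US"},
             subnets=["203.0.113.0/24"]),
    Endpoint("app-eu.example.test", "192.0.2.30", healthy=False, regions={"DE"}),
]


def geographic(origin_region):
    """Endpoint mapped to the region the query came from (health ignored here)."""
    for ep in ENDPOINTS:
        if origin_region in ep.regions:
            return ep.name
    return None


def multivalue():
    """Every healthy endpoint address, returned together in one answer."""
    return [ep.address for ep in ENDPOINTS if ep.healthy]


def subnet(source_ip):
    """Endpoint whose configured range contains the query's source address."""
    addr = ipaddress.ip_address(source_ip)
    for ep in ENDPOINTS:
        if any(addr in ipaddress.ip_network(cidr) for cidr in ep.subnets):
            return ep.name
    return None
```

For instance, a query from region `"IN"` is answered with `app-in.example.test`, `multivalue()` leaves out the unhealthy `192.0.2.30`, and a source address of `203.0.113.200` maps to `app-us.example.test`. Every answer is still an ordinary record; only the choice varies with the query.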

A full list of DNS routing algorithms supported by Azure Traffic Manager can be found here - Traffic Manager routing methods.

Origin of the DNS Query

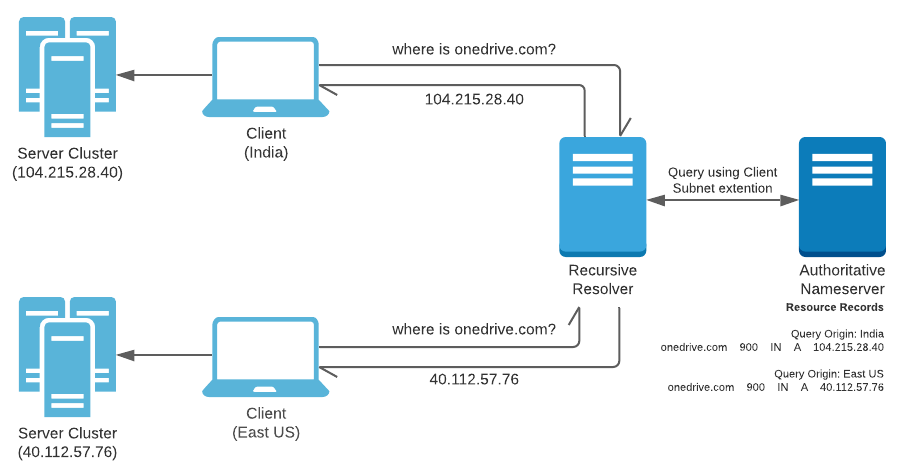

If you have paid close attention, you will have noticed that the query for Resource Records is made by an intermediate Resolver chosen by the client, not by the client itself. The Recursive Resolver is often topologically close to the client, but users can choose a Resolver that resides in a different country or continent for a variety of reasons. In that case, the Authoritative Name Server sees the Resolver's location and may serve Resource Records that are inappropriate for the client.

The DNS protocol supports Extension Mechanisms for DNS, or EDNS0 (originally RFC 2671, now RFC 6891), which many intermediate Resolvers use to attach client subnet information to a DNS query. Since 2016, this attachment has been specified as EDNS Client Subnet, or ECS (RFC 7871). ECS gives Authoritative Name Servers the subnet from which the request originated, so they can respond based on that subnet rather than on the address of the intermediate Resolver.

As a result, the same Resolver can receive different Resource Records for the same query depending on the client's subnet.
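To see what ECS actually carries, here is a sketch that encodes and decodes the option following the layout in RFC 7871: option code 8, an address family, source and scope prefix lengths, then only as many address bytes as the prefix needs. It handles the raw option bytes only, not a full DNS message, and `routing_address` is a hypothetical helper showing which address an authoritative server could base its answer on.

```python
import ipaddress
import struct
from typing import Optional

ECS_OPTION_CODE = 8  # EDNS0 option code for Client Subnet (RFC 7871)


def encode_ecs_option(subnet: str) -> bytes:
    """Build an ECS option as a Resolver would attach it to a query."""
    net = ipaddress.ip_network(subnet, strict=False)  # zero out host bits
    family = 1 if net.version == 4 else 2             # IANA address family numbers
    addr_len = (net.prefixlen + 7) // 8               # send only the bytes the prefix covers
    address = net.network_address.packed[:addr_len]
    # FAMILY, SOURCE PREFIX-LENGTH, SCOPE PREFIX-LENGTH (0 in queries), ADDRESS
    payload = struct.pack("!HBB", family, net.prefixlen, 0) + address
    return struct.pack("!HH", ECS_OPTION_CODE, len(payload)) + payload


def decode_ecs_option(option: bytes):
    """Recover the client subnet as an Authoritative Name Server would see it."""
    code, length = struct.unpack("!HH", option[:4])
    if code != ECS_OPTION_CODE:
        raise ValueError("not an ECS option")
    family, source_prefix, _scope = struct.unpack("!HBB", option[4:8])
    address = option[8:4 + length]
    if family == 1:
        return ipaddress.IPv4Network((address.ljust(4, b"\x00"), source_prefix))
    return ipaddress.IPv6Network((address.ljust(16, b"\x00"), source_prefix))


def routing_address(resolver_ip: str, ecs_option: Optional[bytes] = None):
    """Hypothetical helper: prefer the client subnet over the Resolver's own address."""
    if ecs_option is None:
        return ipaddress.ip_network(resolver_ip)  # a single-host /32 or /128
    return decode_ecs_option(ecs_option)
```

For example, `encode_ecs_option("203.0.113.57/24")` carries only the three bytes `203.0.113`, and decoding it yields `203.0.113.0/24`, so the server learns the client's network without seeing the full client address.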

Bringing the DNS closer

With the implementation discussed above, the Authoritative Name Server may still sit on a distant network for the majority of users worldwide. Most applications can tolerate this inefficiency, since the intermediate Resolver and the Authoritative Name Server exchange only a few hundred bytes of data, which then get cached.

Authoritative Name Servers residing in a single region also create a single point of failure in the event of a disaster. Applications that want multiple failover regions to survive a regional blackout value an optimized route to the Authoritative Name Server. Such applications benefit from hosting their Authoritative Name Servers on an Anycast network. Anycast is a routing method that assigns a single IP address to multiple servers; clients sending requests to that IP address usually end up connecting to the nearest server announcing it. Learn more about Anycast here.

So why not use Anycast for the server cluster itself? That is a valid use case; however, Name Servers over Anycast are popular because they make it easier to spin up a new cluster and route traffic to it: you simply modify records on a Name Server that is already reachable over Anycast. Adding a new cluster behind an Anycast IP address instead involves creating a new Anycast node, which is significantly more effort.

Load-balancing in action with Azure Traffic Manager

Azure Traffic Manager implements DNS-based load-balancing using the techniques described above. Let's take a look at it in action.

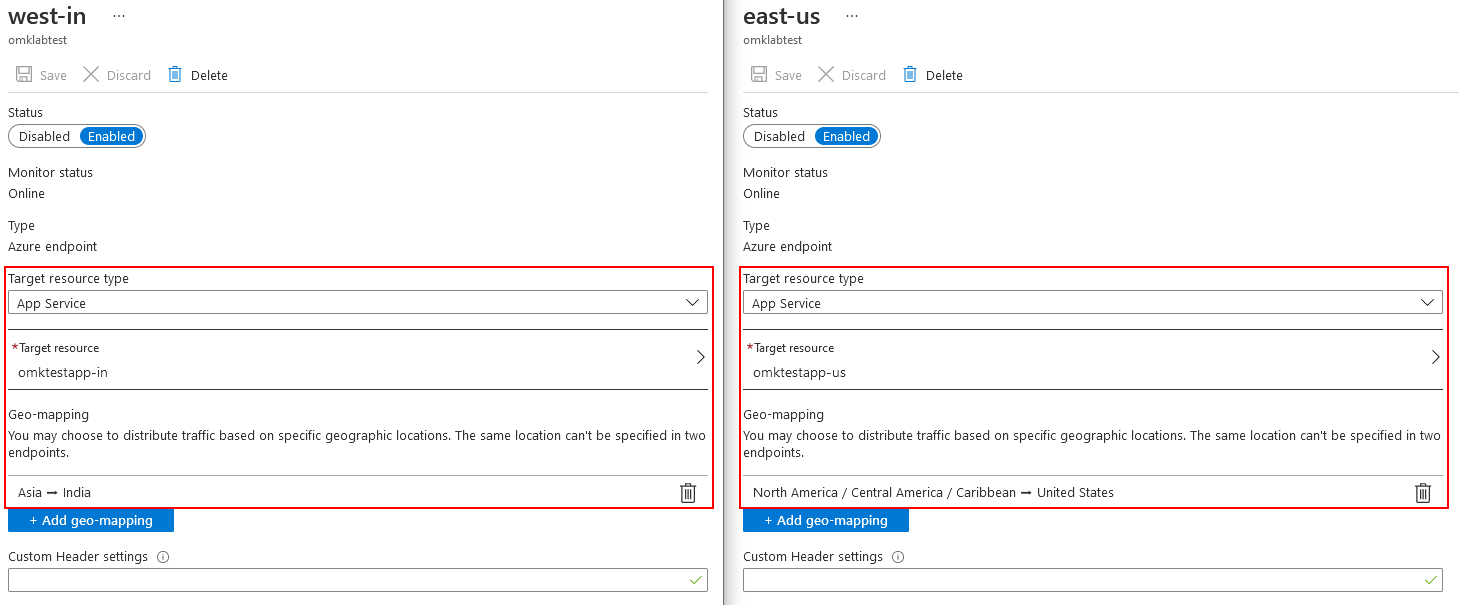

I have created an Azure Traffic Manager resource and configured its routing method as “Geographic”. Two endpoints are registered, as shown above.

DNS queries for the Traffic Manager domain name from clients in Pune, India and New York, US return the following results.

QUESTION SECTION:

omklabtest.trafficmanager.net. IN A

ANSWER SECTION:

omklabtest.trafficmanager.net. 0 IN CNAME omktestapp-in.azurewebsites.net.

omktestapp-in.azurewebsites.net. 0 IN CNAME hosts.omktestapp-in.azurewebsites.net.

hosts.omktestapp-in.azurewebsites.net. 0 IN A 52.136.50.1

Pune, India (routed to South Asia datacenter)

QUESTION SECTION:

omklabtest.trafficmanager.net. IN A

ANSWER SECTION:

omklabtest.trafficmanager.net. 0 IN CNAME omktestapp-us.azurewebsites.net.

omktestapp-us.azurewebsites.net. 0 IN CNAME hosts.omktestapp-us.azurewebsites.net.

hosts.omktestapp-us.azurewebsites.net. 0 IN A 20.49.104.31

New York, US (routed to East US datacenter)

At the time of writing, Azure Traffic Manager costs $0.54 per million DNS queries, with additional health-check costs of up to $2/endpoint/month. If your web app is served from 6 endpoints (one per continent) and receives around a million DNS queries a day (about 30 million a month), you pay approximately ($2 x 6) + ($0.54 x 30) = $28.2/month. Check the pricing page for more details - Azure Traffic Manager pricing.
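The estimate above is simple arithmetic; a small helper makes its assumptions explicit: a flat health-check rate per endpoint, a 30-day month, and the prices quoted at the time of writing, which may since have changed.

```python
def monthly_cost(endpoints: int, daily_queries: int,
                 price_per_million: float = 0.54,
                 health_check_per_endpoint: float = 2.0,
                 days: int = 30) -> float:
    """Rough monthly estimate; default prices are the ones quoted in this post."""
    query_cost = daily_queries * days / 1_000_000 * price_per_million
    health_cost = endpoints * health_check_per_endpoint
    return round(query_cost + health_cost, 2)
```

`monthly_cost(endpoints=6, daily_queries=1_000_000)` reproduces the $28.2 figure: $16.20 for 30 million queries plus $12 for six health-checked endpoints.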

This summarizes what goes on under the hood of services like Azure Traffic Manager, and how DNS-based load-balancing can help you build a global-scale web application.

Have thoughts or questions? Drop a comment or email me at om[at]0x8.in.